Category | Quality Management

Last Updated On 11/02/2026

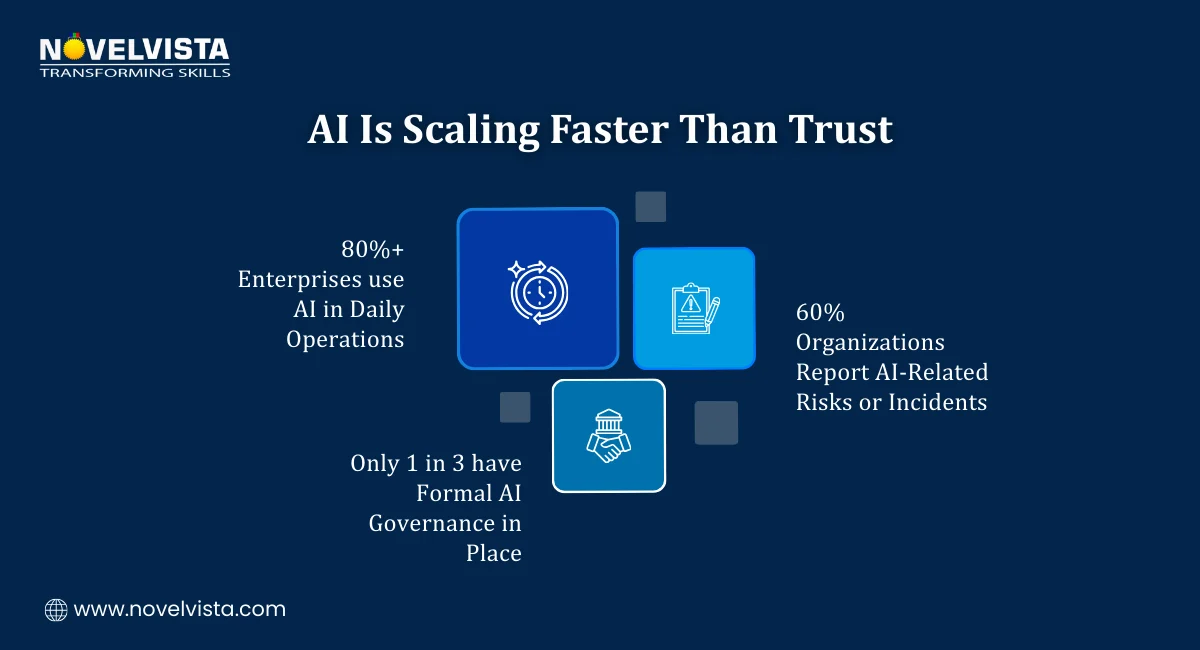

Artificial Intelligence is no longer experimental. According to recent industry studies, over 75% of enterprises are already using AI in at least one business function, and global AI spending is projected to cross $500 billion annually within the next few years. Yet alongside rapid adoption, AI-related incidents, from biased algorithms to opaque decision-making, are increasing just as fast.

This is where ISO 42001 Responsible AI Principles step in.

As governments tighten regulations and customers demand transparency, organizations can no longer afford “black box” AI systems. Responsible AI is now a business, legal, and ethical necessity, not a buzzword. ISO/IEC 42001 provides the first internationally recognized management system standard designed specifically to govern AI responsibly.

So who is this guide for?

If you’re a business leader deploying AI, a compliance professional managing risk, an IT manager overseeing AI systems, or a governance professional trying to stay ahead of regulation, this guide is for you.

Let’s break down what ISO 42001 is, why it matters, and how ISO 42001 Responsible AI Principles help organizations build trustworthy, compliant, and sustainable AI systems.

ISO/IEC 42001 is the world’s first AI management system standard, designed to help organizations build, operate, and continuously improve responsible AI practices. Rather than focusing on technical model design, ISO 42001 emphasizes governance, accountability, oversight, and AI risk management across the entire AI lifecycle, from development and deployment to ongoing monitoring. It also aligns with established standards such as ISO 27001 for information security, ISO 31000 for risk management, and ISO 22301 for business continuity. Together, these standards create a unified governance framework where AI is managed as a strategic business capability, not an uncontrolled experiment.

At the heart of the standard are the ISO 42001 Responsible AI Principles. These principles define how AI systems should be designed, used, and governed to ensure ethical, transparent, and accountable outcomes.

Rather than dictating specific technologies, ISO 42001 focuses on how decisions are made, who is responsible, and how risks are identified and controlled. This makes the standard flexible enough to apply across industries, geographies, and AI maturity levels.

These principles are closely aligned with globally recognized AI governance principles, making ISO 42001 a practical and internationally accepted framework.


One of the most critical ISO 42001 Responsible AI Principles is accountability. AI systems must have clear ownership. Someone, often a role rather than just an individual, must be responsible for AI outcomes.

This includes:

Without accountability, AI risks escalate quickly and silently.

Transparency ensures that AI systems are not “black boxes.” Organizations should be able to explain:

ISO 42001 Responsible AI Principles emphasize documentation, traceability, and explainability, especially for high-impact AI systems that affect people, finances, or safety.
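One way to make traceability concrete is to log a structured record for every AI-assisted decision. The sketch below is illustrative only: the function name `decision_record` and all field names are assumptions made for this example, not terms defined by ISO 42001.

```python
import json
from datetime import datetime, timezone


def decision_record(model_id, model_version, inputs, output, explanation):
    """Build a JSON-serializable audit record for one AI decision.

    All field names are hypothetical; adapt them to your own
    documentation and retention requirements.
    """
    return {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "model_id": model_id,
        "model_version": model_version,
        "inputs": inputs,            # what the model saw
        "output": output,            # what the model decided
        "explanation": explanation,  # human-readable reason for the outcome
    }


# Example: record a single decision and serialize it for an audit log.
record = decision_record(
    model_id="credit-scoring",
    model_version="1.4.2",
    inputs={"income": 52000, "debt_ratio": 0.47},
    output="declined",
    explanation="debt-to-income ratio above policy limit",
)
log_line = json.dumps(record)
```

Keeping the model version next to the inputs and output means a reviewer can later trace which version of the system produced a given outcome.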

Bias in AI is not just a technical flaw; it’s a governance failure. ISO 42001 requires organizations to actively identify and mitigate bias throughout the AI lifecycle.

This includes:

Fairness is a core part of both AI governance principles and responsible AI adoption.
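As a rough illustration of what identifying bias can look like in practice, the sketch below compares the rate of favourable outcomes across groups, a common fairness check often called demographic parity. The metric choice, the function names, and the sample data are assumptions for this example; ISO 42001 does not prescribe a particular fairness metric.

```python
from collections import defaultdict


def selection_rates(records):
    """Return the share of favourable outcomes per group.

    `records` is an iterable of (group, approved) pairs, where `approved`
    is True when the AI system produced the favourable outcome.
    """
    totals = defaultdict(int)
    positives = defaultdict(int)
    for group, approved in records:
        totals[group] += 1
        if approved:
            positives[group] += 1
    return {group: positives[group] / totals[group] for group in totals}


def parity_gap(records):
    """Difference between the highest and lowest group selection rates."""
    rates = selection_rates(records)
    return max(rates.values()) - min(rates.values())


# Hypothetical decisions for two groups, A and B.
decisions = [
    ("A", True), ("A", True), ("A", False), ("A", True),
    ("B", True), ("B", False), ("B", False), ("B", False),
]
rates = selection_rates(decisions)  # A: 0.75, B: 0.25
gap = parity_gap(decisions)         # 0.5
```

A large gap does not prove discrimination on its own, but it is the kind of measurable signal that can trigger a documented review.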

AI systems must perform reliably and safely under expected conditions. ISO 42001 Responsible AI Principles stress:

This aligns closely with AI risk management, ensuring AI failures do not disrupt business operations or cause harm.

AI should support human decision-making, not replace it blindly. ISO 42001 promotes human-in-the-loop or human-on-the-loop mechanisms, particularly for high-risk AI use cases.
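A human-in-the-loop mechanism can be as simple as a routing rule that sends certain predictions to a reviewer instead of acting on them automatically. The following is a minimal sketch under stated assumptions: the `Prediction` type, the `route` function, and the 0.90 threshold are hypothetical and would need to be set by your own governance process.

```python
from dataclasses import dataclass


@dataclass
class Prediction:
    case_id: str
    label: str
    confidence: float  # model confidence between 0.0 and 1.0
    high_risk: bool    # flagged by policy as a high-risk use case


REVIEW_THRESHOLD = 0.90  # hypothetical value, not taken from the standard


def route(prediction, threshold=REVIEW_THRESHOLD):
    """Send high-risk or low-confidence predictions to a human reviewer."""
    if prediction.high_risk or prediction.confidence < threshold:
        return "human_review"
    return "auto_approve"


# Example routing decisions.
routine = route(Prediction("c1", "approve", 0.97, high_risk=False))
uncertain = route(Prediction("c2", "approve", 0.62, high_risk=False))
sensitive = route(Prediction("c3", "decline", 0.99, high_risk=True))
```

Note that the high-risk flag overrides confidence: even a very confident prediction goes to a person when the use case itself is classified as high risk.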

Human oversight ensures:

ISO 42001 does not exist in isolation; it translates widely accepted AI governance principles into a practical, auditable management system. It covers essential elements such as defined AI policies and objectives, governance committees or review boards, measurable performance metrics and controls, and regular internal audits and management reviews. By embedding AI governance within existing enterprise governance structures, organizations ensure consistent oversight and avoid fragmented or ad-hoc approaches to managing AI systems.

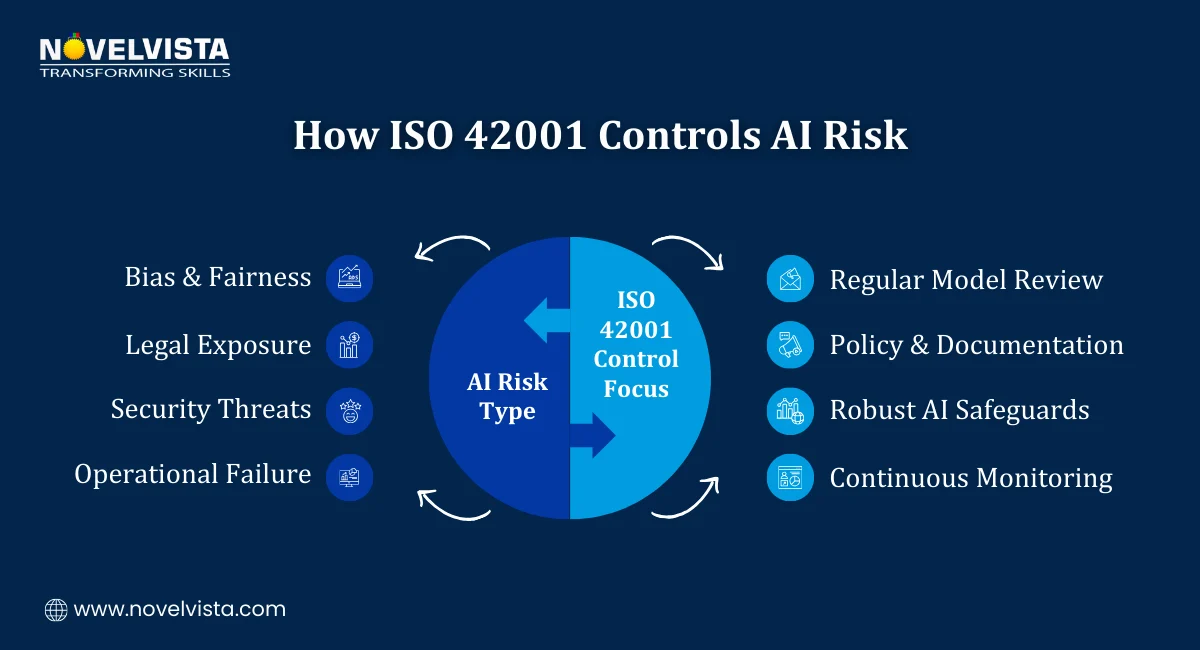

Effective AI risk management is a core requirement of ISO 42001, requiring organizations to systematically identify, assess, treat, and monitor AI-specific risks throughout the AI lifecycle. These risks commonly include bias and discrimination, poor data quality, security vulnerabilities, model drift and performance degradation, and legal or regulatory non-compliance. By embedding AI risk management into everyday operations, ISO 42001 ensures risks are continuously reviewed and controlled, not just addressed after incidents occur.
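The identify, assess, treat, and monitor cycle described above is often tracked in a risk register. The sketch below shows one possible shape for such a register. The 1 to 5 likelihood and impact scales, the multiplication-based score, the threshold of 12, and all names are assumptions for illustration; ISO 42001 does not mandate a specific scoring method.

```python
from dataclasses import dataclass


@dataclass
class AIRisk:
    name: str
    likelihood: int      # assumed scale: 1 (rare) to 5 (almost certain)
    impact: int          # assumed scale: 1 (minor) to 5 (severe)
    owner: str           # accountable role, not necessarily a person
    treatment: str = ""  # empty until a treatment plan is recorded

    @property
    def score(self):
        return self.likelihood * self.impact


def needs_treatment(risks, threshold=12):
    """Return untreated risks at or above the threshold, highest score first."""
    open_risks = [r for r in risks if r.score >= threshold and not r.treatment]
    return sorted(open_risks, key=lambda r: r.score, reverse=True)


# Hypothetical register built from the risk types named in the text.
register = [
    AIRisk("Bias and discrimination", 4, 5, owner="AI Ethics Lead"),
    AIRisk("Poor data quality", 3, 3, owner="Data Steward"),
    AIRisk("Model drift", 3, 4, owner="ML Operations"),
    AIRisk("Security vulnerabilities", 3, 5, owner="CISO",
           treatment="Quarterly penetration testing"),
]
priorities = [risk.name for risk in needs_treatment(register)]
```

Re-running the same check on a schedule, rather than only after an incident, is what turns the register into continuous monitoring.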

Organizations that adopt ISO 42001 Responsible AI Principles gain tangible benefits:

Responsible AI is quickly becoming a competitive advantage.

Many AI frameworks exist, including:

What makes ISO 42001 different is its certifiable, management-system-based approach. It translates high-level ethics into operational controls that organizations can implement, measure, and audit.

For global organizations, ISO 42001 provides consistency across regions and regulatory landscapes.

Getting started with ISO 42001 Responsible AI Principles doesn’t require rebuilding everything from scratch.

A practical approach includes:

Early adoption helps organizations stay ahead of compliance requirements.

As AI regulation accelerates worldwide, responsible AI will shift from “best practice” to baseline expectation. ISO 42001 Responsible AI Principles are likely to become a reference point for regulators, customers, and auditors alike.

Organizations that act now will be better positioned to scale AI confidently, ethically, and sustainably.

The rise of AI brings unprecedented opportunity, but it also demands accountability. As AI systems increasingly influence decisions, outcomes, and trust, organizations can no longer rely on informal controls or after-the-fact fixes. ISO 42001 Responsible AI Principles offer a structured, globally recognized framework to govern AI with clarity, consistency, and confidence.

By embedding strong AI governance principles and disciplined AI risk management into everyday operations, organizations move beyond experimentation to sustainable, responsible AI adoption. The result is AI that delivers value without compromising ethics, transparency, or control.

Responsible AI is no longer a future goal; it is a present-day expectation. ISO 42001 doesn’t just define what responsible AI should look like; it gives organizations a structured way to achieve it.

Ready to strengthen your Responsible AI expertise?

The ISO/IEC 42001 Lead Auditor Certification Training by NovelVista is designed for professionals who want to move beyond theory and confidently assess, audit, and improve AI management systems. This course equips you with practical auditing skills, hands-on understanding of ISO 42001 Responsible AI Principles, and real-world insights into AI governance principles and AI risk management. Ideal for AI governance leaders, auditors, compliance professionals, and risk managers, it also provides a globally recognized credential to support your career growth.

Start your ISO 42001 Lead Auditor journey today and lead responsible AI with confidence.
